Difference between revisions of "Scientific Figures"


Revision as of 23:26, 12 June 2012


Interpreting scientific figures

Epidemiologic studies of passive smoke (ETS) – not the same as science

The epidemiology of ETS claims correlations of ETS exposure with cancer, cardiovascular, and other diseases that are not caused by single entities such as viruses or bacteria, but depend on a constellation of possible causes, none of them either necessary or sufficient. Laboratory and clinical studies have proven unable to determine specific causal mechanisms for such diseases. In this regard, Doll and Peto, arguably the most prominent epidemiologists today, have concluded that:

- [E]pidemiological observations...have serious disadvantages... [T]hey can seldom be made according to the strict requirements of experimental science and therefore may be open to a variety of interpretations. A particular factor may be associated with some disease merely because of its association with some other factor that causes the disease, or the association may be an artefact due to some systematic bias in the information collection...

- [I]t is commonly, but mistakenly, supposed that multiple regression, logistic regression, or various forms of standardization can routinely be used to answer the question: “Is the correlation of exposure (E) with disease (D) due merely to a common correlation of both with some confounding factor (or factors)?”

- ... Moreover, it is obvious that multiple regressions cannot correct for important variables that have not been recorded at all... [T]hese disadvantages limit the value of observations in humans, but...until we know exactly how cancer is caused and how some factors are able to modify the effects of others, the need to observe imaginatively what actually happens to various different categories of people will remain. *

- * (Doll R, Peto R, The causes of cancer, JNCI 66:1192-1312, 1981. p. 1281)

(The “multiple regression” and “logistic regression” referred to in the quotes above are techniques used in the statistics of epidemiology.)

Thus, while epidemiologists insist that their discipline is a science, clearly it is not a mainstream experimental science that produces reliable causal connections that could justify public and private policies.

Study types in epidemiology

Epidemiologic risks in general are estimated from observing differences in the frequency with which diseases appear (incidence) among groups more or less exposed to whatever agent the researchers are studying. Various types of studies are used in epidemiology, but only two have been used in the case of PS. Let’s briefly examine how these studies are conducted, and what they actually measure.

Retrospective cohort (or longitudinal) studies

- These studies record different individual recalls of disease incidence in groups of people possibly exposed to PS to varying degrees during the previous course of their lifetimes. In such studies, risk is estimated from differences of incidence in relation to differences in PS exposure. Only a handful of such studies have been performed in regard to PS.

Case-control studies

- These constitute by far the majority of PS studies. They record different individual recalls of possible lifetime PS exposure in two groups of people. One of these groups is composed exclusively of subjects all having the disease under study (lung cancer, for instance): this group is called the cases. The other is composed of subjects who are all free of the disease under study: this group is called the controls.

- In case-control studies the incidence is 0% in the controls and 100% in the cases. Therefore, a key understanding is that in such studies risks are conjectured as differentials of exposure recall, and not actually estimated as differentials of disease incidence. Increased risk is inferred but not directly estimated if exposure is found to be higher among cases, and protection is inferred but not directly estimated if exposure is found to be higher among controls.

Please note that the term “individual recall” means the recollections of individual people concerning the phenomenon that the researchers are interested in. In other words, researchers in these studies use people’s memories to guess the actual amount of second-hand smoke that they were exposed to, and the comparison of the case and control groups is based on this recollection. Obviously, this fact alone is a considerable “wild card” when it comes to the reliability of the basic data upon which the study depends.

Relative Risk/Odds Ratio

The arithmetic of risk calculation is the same for cohort and case-control studies, except for a difference in terminology. In cohort studies the ratio used for risk calculation is called RR (relative risk), while in case control studies the ratio is called OR (odds ratio).

However, the important difference is that the cohort studies estimate risk directly as differentials of disease incidence, since cohort studies observe only the health outcomes that could be associated with exposure to a particular factor. The case-control studies only assume risk from differentials of exposure, since they are designed to observe the exposure that subjects may have had in the past to the factor of interest.

Let us go into a bit more detail as to how the calculations are done – but don’t worry, it is simple arithmetic!

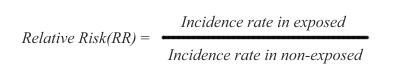

In cohort studies, risk is measured as a difference in disease incidence between exposed and non-exposed subjects. The risk is defined as relative risk (RR), and it is expressed this way:

RR = (incidence rate in exposed subjects) / (incidence rate in non-exposed subjects)

Thus, the disease incidence rate in the exposed subjects is simply divided by the incidence rate in non-exposed subjects. The RR ratio reflects that a certain incidence of disease is observed in both non-exposed and exposed subjects, due to multiple background causes operating in conjunction with, or entirely separate from the exposure under study. Therefore, risk in the exposed is said to be an increment or decrement of incidence, relative to the basic incidence of the non-exposed subjects.

In the RR ratio above, if the rates are the same in exposed and non-exposed subjects, the RR=1 and therefore there is no risk differential. If RR is greater than 1, the risk is said to be increased in the exposed subjects. If RR is smaller than 1, the risk is said to be decreased in the exposed subjects, indicating that the exposure under study might be possibly protective.
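The arithmetic described above can be sketched in a few lines of Python. This is only an illustration: the function name and the counts in the example are hypothetical and do not come from any actual study.

```python
def relative_risk(exposed_cases, exposed_total, unexposed_cases, unexposed_total):
    """Relative risk: incidence in the exposed divided by incidence in the non-exposed."""
    if exposed_total <= 0 or unexposed_total <= 0:
        raise ValueError("group sizes must be positive")
    if unexposed_cases <= 0:
        raise ValueError("RR is undefined when the non-exposed group has no cases")
    incidence_exposed = exposed_cases / exposed_total
    incidence_unexposed = unexposed_cases / unexposed_total
    return incidence_exposed / incidence_unexposed


# Hypothetical cohort: 30 cases among 1000 exposed, 20 cases among 1000 non-exposed.
rr = relative_risk(30, 1000, 20, 1000)
print(round(rr, 2))  # 1.5
```

With equal incidence in both groups the function returns 1, which corresponds to no risk differential.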

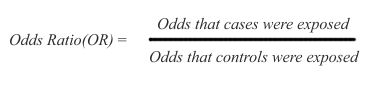

Because case-control studies infer but do not directly estimate possible risk, their results are expressed as odds ratios (OR), namely the ratio between the odds (expressed as % or other rate) of being exposed for the cases and the controls:

OR = (odds of exposure among the cases) / (odds of exposure among the controls)

In the above ratio, if the odds of exposure are the same among cases and controls, the OR=1 and there is no inference of difference in risk. If OR is greater than 1, there is an inference of increased risk in the cases. If OR is smaller than 1, there is an inference of decreased risk for the cases, presuming that the exposure may possibly protect against the disease under study.
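The odds ratio can be sketched the same way. The sketch assumes that each subject's recalled exposure has already been reduced to a simple yes/no; the function name and the counts are hypothetical.

```python
def odds_ratio(cases_exposed, cases_unexposed, controls_exposed, controls_unexposed):
    """Odds of recalled exposure among cases divided by the odds among controls."""
    counts = (cases_exposed, cases_unexposed, controls_exposed, controls_unexposed)
    if min(counts) <= 0:
        raise ValueError("all four counts must be positive for this simple formula")
    odds_cases = cases_exposed / cases_unexposed
    odds_controls = controls_exposed / controls_unexposed
    return odds_cases / odds_controls


# Hypothetical study: 60 of 100 cases and 50 of 100 controls recall being exposed.
print(round(odds_ratio(60, 40, 50, 50), 2))  # 1.5
```

Note that the inputs are counts of recalled exposure, not counts of disease, which is exactly the distinction between OR and RR drawn above.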

Both cohort and case-control studies are affected by similar difficulties of design, data collection, and interpretation — difficulties that are far worse for case-control studies that uniquely rely on vague recollections of exposure.

How to interpret scientific epidemiology reports?

| Hypothesis |

| An original assumption that must be demonstrated or rejected through experimentation. |

The reliability of any empirical evidence, scientific or not, depends on having met three basic benchmarks:

- An assurance of identity, namely that what is being measured is indeed what is claimed to be measured, and measured with sufficient accuracy.

- An assurance of the absence of other explanations, namely that the effects observed are due exclusively to what is being measured (exposure to ETS, in our case), and not to other disturbances that interfere with the observations and may alter and confound the results.

- An assurance of consistency, namely that results are consistently reproduced by different reports.

Without meeting these three guarantees, no hypothesis can aspire to reach any degree of credible evidence, and cannot be credibly taken as the basis for reasoned policy decisions, either public or private.

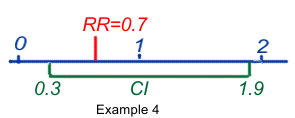

The important figures in a published scientific report are the Relative Risk (RR) or Odds Ratio (OR) together with the Confidence Interval (CI). The CI gives the lower and upper bounds around the published RR or OR. A 95% CI is commonly read as indicating a 95% chance that the real value of the RR or OR lies between these bounds (strictly speaking, 95% of intervals constructed in this way would contain the real value).
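To show where such an interval comes from, here is a minimal sketch that computes a 95% CI for an RR. It assumes a common textbook approximation based on the logarithm of the RR; published studies may use other methods, and the counts are hypothetical.

```python
import math


def rr_with_ci(exposed_cases, exposed_total, unexposed_cases, unexposed_total, z=1.96):
    """Return (RR, lower bound, upper bound) using a log-based approximation.

    z=1.96 corresponds to a 95% confidence level.
    """
    rr = (exposed_cases / exposed_total) / (unexposed_cases / unexposed_total)
    # Approximate standard error of ln(RR)
    se = math.sqrt(1 / exposed_cases - 1 / exposed_total
                   + 1 / unexposed_cases - 1 / unexposed_total)
    lower = math.exp(math.log(rr) - z * se)
    upper = math.exp(math.log(rr) + z * se)
    return rr, lower, upper


# Same RR of 1.5, first in a small hypothetical cohort, then in one ten times larger.
small = rr_with_ci(30, 1000, 20, 1000)
large = rr_with_ci(300, 10000, 200, 10000)
print([round(v, 2) for v in small])
print([round(v, 2) for v in large])
```

In this hypothetical case the small cohort gives an interval that straddles 1 while the larger one does not, so the same RR can be reported with very different degrees of uncertainty.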

Scientists have defined a rule of thumb for the values of RRs or ORs:

"In epidemiologic research, [increases in risk of less than 100 percent] are considered small and are usually difficult to interpret. Such increases may be due to chance, statistical bias, or the effects of confounding factors that are sometimes not evident". Source: National Cancer Institute, Press Release, October 26, 1994

"As a general rule of thumb, we are looking for a relative risk of 3 or more before accepting a paper for publication." - Marcia Angell, editor of the New England Journal of Medicine

"My basic rule is if the relative risk isn't at least 3 or 4, forget it." - Robert Temple, director of drug evaluation at the Food and Drug Administration.

"An association is generally considered weak if the odds ratio [relative risk] is under 3.0 and particularly when it is under 2.0, as is the case in the relationship of ETS and lung cancer." - Dr. Kabat, IAQC epidemiologist

So let's have a look at some examples:

- RR or OR = 1.9 (95%CI 1.2-4.6) means that the best estimate of the risk is 1.9, but that its true value could be anywhere between 1.2 and 4.6, with a probability of 95%. Because every value in that range is >1 and would mean an increase of risk, the result is statistically significant at the 95% level.

- RR or OR = 1.9 (95%CI 0.7-2.3) means that the best estimate of the risk is 1.9, but that its true value could be anywhere between 0.7 and 2.3, with a probability of 95%. Some values in that range are <1 and could mean protection, others are >1 and could mean risk. As a consequence the result is said to be equivocal and not statistically significant.

- RR or OR = 0.7 (95%CI 0.2-0.9) means that the best estimate of the risk is 0.7, but that its true value could be anywhere between 0.2 and 0.9, with a probability of 95%. Because every value in that range is <1 and would mean a reduction of risk, the result is statistically significant at the 95% level.

- RR or OR = 0.7 (95%CI 0.3-1.9) means that the best estimate of the risk is 0.7, but that its true value could be anywhere between 0.3 and 1.9, with a probability of 95%. Some values in that range are <1 and could mean protection, others are >1 and could mean risk. As a consequence the result is said to be equivocal and not statistically significant.
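The reading rule used in the four examples above boils down to checking whether the interval contains 1. A small sketch follows; the function name and the wording of the returned labels are our own.

```python
def interpret_ci(ci_low, ci_high):
    """Classify a reported RR or OR by the position of its 95% CI relative to 1."""
    if ci_low > ci_high:
        raise ValueError("lower bound must not exceed upper bound")
    if ci_low > 1:
        return "statistically significant increase of risk"
    if ci_high < 1:
        return "statistically significant reduction of risk"
    return "equivocal, not statistically significant"


# The four examples from the list above: (estimate, lower bound, upper bound)
for estimate, low, high in [(1.9, 1.2, 4.6), (1.9, 0.7, 2.3), (0.7, 0.2, 0.9), (0.7, 0.3, 1.9)]:
    print(estimate, (low, high), "->", interpret_ci(low, high))
```

Note that the point estimate itself plays no part in the decision: 1.9 appears once as significant and once as equivocal, depending only on the interval.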

Confounders or co-factors

Confounders are defined as hidden risk factors that could also participate in an association. Study subjects with cancer must, as a matter of course, have been more exposed to other cancer risk factors than the healthy controls – and there are many risks for lung cancer that studies in general do not bother to control.

For instance, ETS studies dealing with lung cancer should consider some three dozen risk factors as potential confounders reported in the literature, and studies of cardiovascular conditions face over 300 published accounts of risk factors as potential confounders. It should be apparent that without a credible control for at least all major known confounders, epidemiologic studies of ETS could not be validly interpreted.

And even when these confounders all are taken into account, what factor was the decisive one that triggered the cancer?

Compare it with a glass of water: if you hold one lighter under it, it may never start to boil. Add a second lighter and it may boil in 100 years. Maybe after the tenth or the twentieth lighter has been lit under the glass, the water will start to boil within a lifetime. But which lighter was the important one? Probably none, as they have all worked together to heat the water.

Bias: Corrupting influences

Biases are common. In simple terms, a bias is a type of error that alters the base of comparison in the study to some extent and thus “throws the results off”. When we want to compare two groups of people in an effort to get information about the possible effect of a particular factor upon them, we must start by comparing “apples with apples” as much as possible. For the sake of illustrating the point, let us imagine an extreme example of bias: the comparison of a group of eight-year-old girls with a group of 80-year-old male former prisoners of war! The differences between these groups in such factors as age, life experience and medical history are so extreme that any comparison would hopelessly compromise the results of the study.

A selection bias occurs when control subjects mismatch the test or case subjects in regard to characteristics that cannot be adjusted for age, gender, etc. In fact, selection bias can only be reduced, for it is impossible to eliminate. Its presence can only be guessed but not measured with any precision.

Information bias relates to inevitable inaccuracies in data collection.

Recall bias – that is, inaccurate data resulting from people’s inaccurate memories -- is most frequent, and is of special concern in case-control studies, where cases with a disease are apt to recall more intense and longer exposures than the controls without the disease, thus contributing to a false appearance of increased risk. Recall bias and error may be increased when exposure information is retrieved from next of kin of deceased subjects. In general, recall data are based exclusively on vague individual recall statements, and no verification is possible. It is only natural that persons with cancer or other diseases would be more inclined than persons without disease to blame PS exposure, in an effort to rationalize their disease and explain it.

Differential accuracy of disease diagnostics and death certificates may affect the classification of subjects. A misclassification bias occurs when subjects wrongly declare themselves to be non-smokers and are mistakenly classified as such. The tendency to cheat and misclassify themselves as non-smokers would be naturally more prevalent among subjects with cancer or other diseases than in control subjects that are otherwise healthy, thus contributing to a false impression of elevated risk.

Publication bias is a bias with regard to what is likely to be published, among what is available to be published. Not all bias is inherently problematic: a bias against publishing lies, for instance, is a good bias. One very problematic and much-discussed bias, however, is the tendency of researchers, editors, and pharmaceutical companies to handle the reporting of experimental results that are positive (i.e. showing a significant finding) differently from results that are negative (i.e. supporting the null hypothesis) or inconclusive, which leads to a misleading bias in the overall published literature. Such bias occurs despite the fact that studies with significant results do not appear to be superior to studies with a null result with respect to quality of design.

The dubious magic of meta-analysis

Meta-analysis is a statistical technique used to pool results from different studies. Originally it was developed for summarizing the results of homogeneous randomized clinical trials, a use that remains its legitimate application. However, using meta-analysis for pooling the results of diverse observational PS studies of contrasting outcomes is fraught with irresolvable difficulties.

The procedure gives different weights to studies, primarily in relation to their size. However, meta-analysis does not pool the discrete data that originated each result, but only the final results of each study, regardless of whether they are concordant or discordant, credible or not. The procedure does not discriminate for characteristics of each study, such as design, data collection, standardizations, biases, confounders, adjustments, statistical procedures, etc. Meta-analysis, therefore, produces only a weighted average of the final numerical results of the studies, but does not standardize, relieve, or control for differential corruptions that may be present in each study. If characteristics other than study size are used in weighting studies (e.g. an estimate of study quality), those characteristics are likely discretionary, judgmental, and conducive to different meta-analysis results at the hands of different analysts.
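The weighted average described above can be sketched as follows. The weighting scheme is an assumption on our part: each study is weighted by the inverse of its variance on the logarithmic scale (a common fixed-effect approach), with the variance recovered from the reported 95% CI, so that larger studies with narrower intervals weigh more. The study figures are hypothetical.

```python
import math


def pooled_estimate(studies, z=1.96):
    """Pool (estimate, ci_low, ci_high) tuples into one weighted-average RR or OR.

    Only the final published numbers enter the calculation; nothing about
    design, bias, or confounding of the individual studies is used.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for estimate, ci_low, ci_high in studies:
        # Standard error of ln(estimate), recovered from the width of the CI
        se = (math.log(ci_high) - math.log(ci_low)) / (2 * z)
        weight = 1 / se ** 2  # assumed inverse-variance weight
        weighted_sum += weight * math.log(estimate)
        weight_total += weight
    return math.exp(weighted_sum / weight_total)


# Hypothetical input: one small study reporting 2.0 and one large study reporting 1.0.
print(round(pooled_estimate([(2.0, 1.0, 4.0), (1.0, 0.9, 1.111)]), 2))
```

The pooled figure lands close to the result of the large study, and the function accepts any set of published numbers without knowing anything about how they were obtained.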

Therefore, with the exception of its use for summarizing homogeneous randomized clinical studies, it is abundantly clear that meta-analysis can be used as a stratagem to contrive meaning from studies that have no apparent meaning.